The Real Reason Huge AI Models Actually Work [Prof. Andrew Wilson]

Video Description

Why can billion-parameter models perform so well without catastrophically overfitting? The answer lies in the "simplicity bias" that emerges at scale, a key idea behind the double descent phenomenon. Professor Andrew Wilson from NYU explains why many common-sense ideas in artificial intelligence might be wrong.

For decades, the rule of thumb in machine learning has been to fear complexity. The thinking goes: if your model has too many parameters (is "too complex") for the amount of data you have, it will "overfit" by essentially memorizing the data instead of learning the underlying patterns, which leads to poor performance on new, unseen data. This is the classic "bias-variance trade-off": a balancing act between a model that is too simple and one that is too complex.

Professor Wilson challenges this fundamental belief that complexity should be feared. He makes a few surprising points:

**Bigger Can Be Better**: Massive models don't just get more flexible; they also develop a stronger "simplicity bias". So, if your model is overfitting, the solution might paradoxically be to make it even bigger.

**The "Bias-Variance Trade-off" is a Misnomer**: Wilson claims you don't actually have to trade one for the other. You can have a model that is incredibly expressive and flexible while also being strongly biased toward simple solutions. He points to the "double descent" phenomenon, where performance first gets worse as models get more complex, but then surprisingly starts getting better again.

**Honest Beliefs and Bayesian Thinking**: His core philosophy is that we should build models that honestly represent our beliefs about the world. We believe the world is complex, so our models should be expressive. But we also believe in Occam's razor: the simplest explanation is often the best. He champions Bayesian methods, which naturally balance these two ideas through a process called marginalization, which he describes as an automatic Occam's razor.

TOC:
[00:00:00] Introduction and Thesis
[00:04:19] Challenging Conventional Wisdom
[00:11:17] The Philosophy of a Scientist-Engineer
[00:16:47] Expressiveness, Overfitting, and Bias
[00:28:15] Understanding, Compression, and Kolmogorov Complexity
[01:05:06] The Surprising Power of Generalization
[01:13:21] The Elegance of Bayesian Inference
[01:33:02] The Geometry of Learning
[01:46:28] Practical Advice and The Future of AI

Prof. Andrew Gordon Wilson:
https://x.com/andrewgwils
https://cims.nyu.edu/~andrewgw/
https://scholar.google.com/citations?user=twWX2LIAAAAJ&hl=en
https://www.youtube.com/watch?v=Aja0kZeWRy4
https://www.youtube.com/watch?v=HEp4TOrkwV4

TRANSCRIPT:
https://app.rescript.info/public/share/H4Io1Y7Rr54MM05FuZgAv4yphoukCfkqokyzSYJwCK8

REFS:
Deep Learning is Not So Mysterious or Different [Andrew Gordon Wilson] https://arxiv.org/abs/2503.02113
Bayesian Deep Learning and a Probabilistic Perspective of Generalization [Andrew Gordon Wilson, Pavel Izmailov] https://arxiv.org/abs/2002.08791
Compute-Optimal LLMs Provably Generalize Better With Scale [Marc Finzi, Sanyam Kapoor, Diego Granziol, Anming Gu, Christopher De Sa, J. Zico Kolter, Andrew Gordon Wilson] https://arxiv.org/abs/2504.15208
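The description's remark that marginalization acts as an automatic Occam's razor can be made concrete with a toy example. The sketch below is not taken from the video or the referenced papers. It assumes Bayesian linear regression on polynomial features with a zero-mean Gaussian prior on the weights and Gaussian noise, where the weights can be integrated out in closed form. The function names (`poly_features`, `log_evidence`, `evidence_by_degree`), the synthetic quadratic data, and the values of `prior_var` and `noise_var` are all illustrative assumptions.

```python
import numpy as np


def poly_features(x, degree):
    """Design matrix with columns 1, x, x^2, ..., x^degree."""
    return np.vander(x, degree + 1, increasing=True)


def log_evidence(Phi, y, prior_var=1.0, noise_var=0.05):
    """Log marginal likelihood log p(y | model) with the weights integrated out.

    Assumed model: y = Phi @ w + eps, w ~ N(0, prior_var * I),
    eps ~ N(0, noise_var * I). Marginalizing over w gives
    y ~ N(0, noise_var * I + prior_var * Phi @ Phi.T).
    """
    n = y.shape[0]
    cov = noise_var * np.eye(n) + prior_var * (Phi @ Phi.T)
    chol = np.linalg.cholesky(cov)          # cov = chol @ chol.T
    z = np.linalg.solve(chol, y)            # z @ z equals y^T cov^{-1} y
    log_det = 2.0 * np.sum(np.log(np.diag(chol)))
    return -0.5 * (z @ z + log_det + n * np.log(2.0 * np.pi))


def evidence_by_degree(x, y, degrees=range(0, 9)):
    """Score polynomial models of increasing flexibility on the same data."""
    return {d: log_evidence(poly_features(x, d), y) for d in degrees}


# Synthetic data from a quadratic plus noise (an illustrative assumption).
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=30)
y = 0.5 - x + 2.0 * x**2 + rng.normal(scale=0.2, size=30)

scores = evidence_by_degree(x, y)
for degree, score in scores.items():
    print(f"degree {degree}: log evidence = {score:.2f}")
```

In experiments of this kind the evidence typically climbs until the model is flexible enough to explain the data and then levels off or declines, because a more flexible model spreads its prior probability over more possible datasets. The exact numbers depend on the assumed prior and noise variances, so this illustrates the mechanism under those assumptions and does not say anything quantitative about large neural networks.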

AI Model Mastery Kit

AI-recommended products based on this video

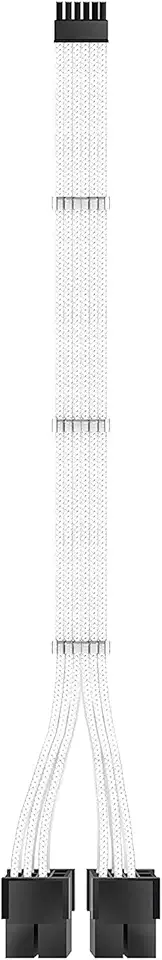

EZDIY-FAB RTX 3000 Series 12 Pin to Dual 8 Pin PCIe Sleeved Extension Cable 300 MM- Connector for NVIDIA Ampere GEFORCE RTX 3060ti 3070 3080 FE Funder Edition- White

OWC 64GB DDR5 4800 PC5-38400 CL40 2Rx4 288-pin 1.1V ECC Registered RDIMM Memory RAM Module Upgrade Compatible with Dell PowerEdge R660XS R760XS

OWC 64GB DDR5 4800 PC5-38400 CL40 2Rx4 288-pin 1.1V ECC Registered RDIMM Memory RAM Module Upgrade Compatible with Dell PowerEdge XE9680

OWC 64GB DDR5 4800 PC5-38400 CL40 2Rx4 288-pin 1.1V ECC Registered RDIMM Memory RAM Module Upgrade Compatible with Dell PowerEdge R6625 R760 R7615 R7625

OWC 64GB DDR5 4800 PC5-38400 CL40 2Rx4 288-pin 1.1V ECC Registered RDIMM Memory RAM Module Upgrade Compatible with Dell PowerEdge HS5610 HS5620

Magnetic Nasal Strips Starter Kit: Comfortable Nasal Breathing Support for Sleep, Helps Reduce Snoring Noise, Includes 60 Tabs (30 Uses) with 4 Sizes

Environet Hydroponic Growing Kit, Self-Watering Mason Jar Herb Garden Starter Kit Indoor, Windowsill Herb Garden, Grow Your Own Herbs from Organic Seeds (Basil)

Herb Garden Planter Indoor Kit 21Pcs Kitchen Herb Garden Starter Kit Growing Kit Including Wooden Box Burlap Pots Soil Discs Gardening Tools Unique Easter Birthday Christmas Gift Ideas for Women Mom

Bonsai Starter Kit – 1x Bonsai Tree | Complete Indoor Starter Kit for Growing Plants with Bonsai Seeds, Tools & Planters – Gardening Gifts for Women & Men

TP-Link Tapo 2K Pan/Tilt Indoor Security WiFi Camera, Baby & Pet Camera w/ 360° Motion Tracking, 2-Way Audio, Night Vision, Cloud & Local Storage (Up to 256 GB), Works w/ Alexa & Google (Tapo C210)
