Build AI App with FULL WEB ACCESS 🌐 (Local + Python GUI + MCP + LangChain Tutorial)

Python Simplified

@pythonsimplified

About

Hi everyone! My name is Mariya and I'm a software developer from Sofia, Bulgaria. I film programming tutorials about Computer Science Concepts, GUI Applications, Machine Learning and Artificial Intelligence, Automation and Web Scraping, Data Science and even Math! 🤓 I'm here to help you with your programming journey (in particular - your Python programming journey 😉) and show you how many beautiful and powerful things we can do with code! 💪💪💪

Video Description

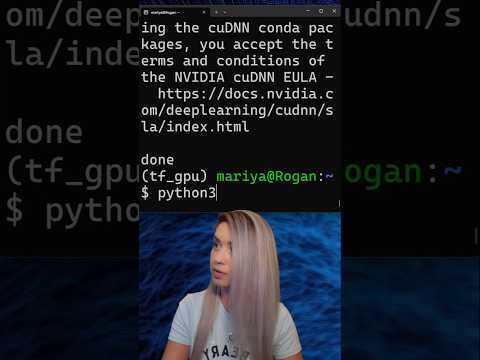

What if your own language model could search the internet and answer real-time questions? 🌐 Just like ChatGPT, but running locally on your system, inside your application, where you have full control! That’s exactly what we will build today! 🛠️🔥

In this dev-focused, step-by-step walkthrough, we’ll build a local AI app using Python, LangChain, and the Model Context Protocol (MCP) 📜, a new open protocol that connects LLMs to real-time data from the internet and other external resources (like files, apps, databases and web pages).

🛠️ What You’ll Build:
- A local Python app where an LLM (via Ollama) can browse the live web.
- An async MCP client that can access tools like web_data_reddit_posts and web_data_linkedin_person_profile.
- A Streamlit GUI with user input, real-time answers, and automatic tool-switching.
- A setup that uses Bright Data MCP to handle CAPTCHAs, JS rendering, proxies & more.

🔍 Tech Stack:
- Python 3.12 🐍
- LangChain (with MCP adapters and Ollama LLM) 🔗
- Ollama (running Gemma3 locally) 🧠
- Bright Data MCP (scraping, proxies, browser API for LLMs) 🌐
- Streamlit for a fast GUI 💻

💡 You’ll Learn:
- What MCP is and how it brings real-time data into LLMs without training or fine-tuning.
- How to structure MCP clients in Python with LangChain’s async tooling.
- How to run everything locally: no OpenAI keys, no cloud lock-in, just raw Python + Node.
- How to cache responses, route URLs to tools, and maintain clean prompts.

👨‍💻 Who This Is For:
Developers who:
- Build AI agents and want direct control over context.
- Need LLMs that can reason over live, external data.
- Are done with SaaS restrictions and want local, hackable AI.

⏰ Timestamps:
01:03 - What's MCP?
05:28 - Setup Web Unlocker Zone [Bright Data]
06:21 - Setup API Key [Bright Data]
06:47 - Specify API Key in .bashrc
08:12 - Run MCP Server
09:38 - Error: Duplicate Zone Name
10:20 - MCP Client Setup [Langchain]
16:04 - Asynchronous MCP Requests
18:40 - Handle MCP Tools
22:28 - Ollama CLI Setup to Run AI Models Locally
24:24 - Langchain Ollama
26:18 - Pass MCP Output into LLM Prompt
29:39 - Design GUI [Streamlit]
31:21 - Streamlit Callback
34:38 - Combine Multiple MCP Tools [Reddit & LinkedIn]
36:16 - Further Development Ideas

🚨 IMPORTANT LINKS 🚨
------------------------------------------------------------------------
🎁 Get $10 Free Bright Data Credits: https://brdta.com/pythonsimplified_mcp
⭐ Official Bright Data MCP GitHub: https://github.com/brightdata/brightdata-mcp
📦 Full Tutorial Code GitHub (Simple MCP App): https://github.com/MariyaSha/simple_mcp_app
------------------------------------------------------------------------

👍 Like if you're into serious AI tooling
🔔 Subscribe for real-world AI engineering tutorials
💬 Comment if you want to see this connected to Discord, GitHub, or terminal agents

#python #pythonprogramming #LLM #LangChain #WebScraping #Ollama #MCP #LocalLLM #Streamlit #AgenticAI #coding #software
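
The "cache responses, route URLs to tools" idea from the list above can be pictured with a small, standard-library-only sketch. This is not the code from the video or the linked repository. Only the two tool names come from the description. The host-to-tool mapping, `pick_tool`, `cached_fetch`, and the `fake_fetch` stand-in (which takes the place of a real MCP tool call) are hypothetical names chosen for illustration.

```python
from functools import lru_cache
from typing import Optional
from urllib.parse import urlparse

# Tool names are taken from the description; the host-to-tool mapping is an assumption.
TOOL_BY_HOST = {
    "reddit.com": "web_data_reddit_posts",
    "linkedin.com": "web_data_linkedin_person_profile",
}

# Records every simulated tool call so the effect of caching is visible.
CALLS = []


def pick_tool(url: str) -> Optional[str]:
    """Return the tool name for a URL's host, or None if no tool matches."""
    host = (urlparse(url).hostname or "").lower()
    for domain, tool in TOOL_BY_HOST.items():
        if host == domain or host.endswith("." + domain):
            return tool
    return None


def fake_fetch(tool: str, url: str) -> str:
    """Hypothetical stand-in for a real MCP tool call; returns dummy text."""
    CALLS.append((tool, url))
    return f"[{tool}] data for {url}"


@lru_cache(maxsize=128)
def cached_fetch(url: str) -> str:
    """Route the URL to a tool and cache the result, keyed by the URL string."""
    tool = pick_tool(url)
    if tool is None:
        raise ValueError(f"No tool registered for {url}")
    return fake_fetch(tool, url)
```

Calling `cached_fetch` twice with the same URL triggers only one simulated tool call, which is the point of caching when every real request costs time or credits. In a real app the stand-in would be replaced by an awaited MCP tool invocation, and an async-aware cache would be needed, because `lru_cache` caches the coroutine object rather than its result.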
